Exploring the Features and Functions of Elicit: An AI Research Assistant for Literature Reviews

This is the third post in a series about using AI tools for academic purposes. In this post, I explore the features and functions of Elicit, a research assistant that leverages language models like GPT-3. The first post in this series was about how academics can ethically use ChatGPT to bolster their research and writing. The second was about using ChatPDF to summarize and extract information from research papers quickly.

Like many of you, I am both apprehensive and astonished by the capabilities of the AI tools released in recent months. Although I remain apprehensive about what the future holds, I’ve already started using many of these tools on a daily basis to generate research ideas, learn about new research areas, and identify relevant literature. I am studying the capabilities of these tools for academic writing development, but I already know they are promising.

Let’s dive in.

What is Elicit?

According to FAQs on Elicit’s website, “Elicit is a research assistant using language models like GPT-3 to automate parts of researchers’ workflows. Currently, the main workflow in Elicit is the Literature Review. If you ask a question, Elicit will show relevant papers and summaries of key information about those papers in an easy-to-use table.”

According to one of its developers, Elicit saves researchers an average of 1.4 hours per week.

What can it do?

Elicit can help researchers:

1. Quickly locate papers on a research topic

2. Analyze and organize multiple papers (you can upload your own PDFs)

3. Summarize evidence from the top cited papers on a research topic

4. Explore and brainstorm research questions

5. Identify search terms

6. Define terms

7. Narrow or adjust your research direction

My experience using Elicit

I used Elicit to help me develop a literature review for a study on the use of ChatGPT-4 for academic writing development. This is a detailed account of my initial experience.

Note: Errors and potential issues I encountered while using Elicit are in red.

Features and Functions

When I opened Elicit, I was prompted to enter a research question. I could have also chosen “run Elicit over your own collection of papers.” I do not have any papers yet, so I skipped this step.

I asked, What is the utility of ChatGPT for academic writing development?

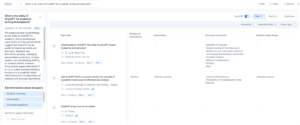

I was immediately directed to a page that displayed a table with two columns: (1) paper title and (2) abstract summary. Each row in the table includes the paper title, authors, journal (or source if the paper is a reprint), year published, number of citations, and DOI link. A link to the full-text PDF is also included if it is available. I also noticed a bar graph icon beside a few of the journals. If you click on the graph, you can view the journal’s impact factor (this is a great feature for novice researchers who may not know which journals to trust).

On the left-hand side of the screen, Elicit provides a “Summary of the top 4 papers,” which is in beta testing mode. Below the summary is a feature called “Add information about all papers,” which allows me to add columns for information I want to include in the table. I decided to add a column for intervention, outcomes measured, number of participants, and detailed study design. Additional options include metadata (e.g., influential citations, DOI, funding source, etc.), population studied, intervention studied, result, and methodology.

Additional features are included on the top right-hand side of the screen:

- Has PDF: At the top right-hand side of the screen, you can click the toggle “Has PDF” to filter out papers without a full-text PDF. I usually prefer to view all papers because I can access papers behind paywalls using my university library database. However, I decided to select this filter so that I could easily compare the information in Elicit to the full-text paper.

- Filter: You can filter by entering keywords within the abstract, published after date, and study types. The study types available include RCT, Review, Systematic Review, Meta-Analysis, and Longitudinal. I entered the keyword “ChatGPT” so that only papers with this keyword in the abstract are included in the table.

- Sort by: You can sort by paper title, abstract summary, PDF, Year, and Citations. Search results can be exported into a CSV or bib file (more on this later). I sorted by citations to view the papers with the most citations first.
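For readers who want to see the logic behind these controls, here is a minimal Python sketch of the three choices I made: require a PDF, keep papers whose abstract contains a keyword, and sort by citations. It is only an illustration, not how Elicit works internally. The `Paper` fields, the `filter_and_sort` function, and the placeholder records are all made up for this example, and I am assuming that “published after” excludes the given year.

```python
from dataclasses import dataclass


@dataclass
class Paper:
    # Hypothetical fields chosen for this sketch; not Elicit's data model.
    title: str
    abstract: str
    year: int
    citations: int
    has_pdf: bool


def filter_and_sort(papers, keyword, published_after=None, require_pdf=False):
    """Keep papers whose abstract mentions `keyword`, then sort by citations."""
    needle = keyword.lower()
    kept = [
        p for p in papers
        if needle in p.abstract.lower()  # case-insensitive keyword match
        and (published_after is None or p.year > published_after)  # assumed exclusive
        and (p.has_pdf or not require_pdf)
    ]
    # Most-cited first, mirroring the "sort by citations" choice above.
    return sorted(kept, key=lambda p: p.citations, reverse=True)


# Placeholder records, not real papers.
papers = [
    Paper("Placeholder A", "We study ChatGPT for drafting.", 2023, 12, True),
    Paper("Placeholder B", "A survey of writing centers.", 2021, 40, True),
    Paper("Placeholder C", "ChatGPT and peer feedback.", 2023, 30, False),
    Paper("Placeholder D", "Evaluating chatgpt output.", 2023, 5, True),
]

for p in filter_and_sort(papers, "ChatGPT", require_pdf=True):
    print(p.citations, p.title)
```

With the “Has PDF” requirement on, the most-cited placeholder that mentions the keyword (Placeholder C) drops out because it has no PDF, which is the trade-off I accepted when I selected that filter.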

Search Results

It is not immediately clear how many papers were returned.

The “top 4 papers” summary automatically updated to include a summary of papers that were included after the filters were applied. The summary stated:

The papers provide mixed findings on the utility of ChatGPT for academic writing development. Lund (2023) and Macdonald (2023) suggest that ChatGPT can be useful for improving search and discovery, reference and information services, cataloging and metadata generation, content creation, and accelerating drafting of research articles. However, Thorp (2023) argues that ChatGPT is not an author and has limitations in science and academia. Mijwil (2023) discusses the importance of cybersecurity in protecting medical information when using ChatGPT. Overall, the papers suggest that ChatGPT has potential benefits for academic writing development, but ethical considerations and limitations need to be taken into account.

Without having reviewed the articles, I can’t be confident this summary is correct. However, if this information is accurate, this is a very useful and time-saving feature.

I selected a paper to view additional information. A pop-up screen appeared that provided additional details:

- Abstract Summary

- What did they test?

- What outcomes did they measure?

- Who were the participants?

- Can I trust this paper?

- Possible critiques

- Other citations

I was able to click on each section to see where Elicit extracted the information from the paper. I opened the article PDF in a separate window and compared it to the information on the screen. I immediately noticed that the paper PDF included tables and excerpts that were not included in the Elicit preview. I then began checking the information provided by Elicit for accuracy.

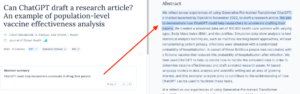

Abstract Summary

Elicit included the following sentence, “ChatGPT could help researchers accelerate drafting their papers.” I could see where this information was pulled from the paper abstract, and the paper’s aim was highlighted.

The abstract summary lacks important details, such as the use of a simulated data set to test ChatGPT’s ability to handle data and draft a related research paper.

What did they test?

According to Elicit, the authors tested a fictitious vaccine.

This information is incorrect. The authors tested ChatGPT’s ability to draft a research article about vaccine effectiveness. A fictitious vaccine was included in the simulated data set but was not tested by the researchers.

What outcomes did they measure?

According to Elicit, the authors measured vaccine effectiveness and the probability of hospitalization after infection.

This information is incorrect. The authors didn’t technically “measure” an outcome. Rather, they evaluated ChatGPT’s ability to draft a research paper after being asked to handle the simulated data to determine vaccine effectiveness.

Who were the participants?

According to Elicit, participants included 100,000 healthcare workers 18 years and older.

This information is misleading, but I can see why Elicit got confused. There are no actual participants, but the authors included simulated data on 100,000 healthcare workers.

Can I trust this paper?

According to Elicit:

· The study was simulation-based (this is correct)

· Funded by not funded (the article does not mention funding)

· 100,000 participants (partially correct)

· No mention found of multiple comparisons (not applicable)

· No mention found of intent to treat (not applicable)

· No mention found of preregistration (not applicable)

Possible critiques

In this section, Elicit included the following statement, “We looked at how this paper has been cited but couldn’t find any mention of methodological flaws.” It seems that the paper’s description of using simulated data to test ChatGPT led Elicit to interpret it as an intervention study. Because it is not one, the traditional methodological flaws Elicit looks for do not apply to this paper.

Other citations

Elicit provides a list of other citations, which all seem relevant at face value. This research area is new to me, so I would need to take a deeper dive into the literature to assess the accuracy of these suggested citations.

Next, I decided to export the CSV file so that I could view all articles in a single file. However, the CSV file contained only the papers on my screen. I started to click “show more” repeatedly, but the list never seemed to end. This is problematic – how many rows are there?
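One workaround I can imagine is to export the CSV several times as more rows load and then count the unique papers across those files. The Python sketch below shows the idea under some loud assumptions: I have not confirmed the column headers in Elicit’s export, so the `DOI` column name, the `count_unique_papers` function, and the sample rows are all hypothetical placeholders.

```python
import csv
import io


def count_unique_papers(csv_texts, key_column="DOI"):
    """Count total rows and unique keys across several exported CSV files.

    `key_column` is an assumed header name; change it to match the real export.
    """
    seen = set()
    total_rows = 0
    for text in csv_texts:
        for row in csv.DictReader(io.StringIO(text)):
            total_rows += 1
            # Normalize case and whitespace so the same DOI is not counted twice.
            key = (row.get(key_column) or "").strip().lower()
            if key:
                seen.add(key)
    return total_rows, len(seen)


# Placeholder exports standing in for two successive downloads.
export_1 = "Title,DOI\nPlaceholder A,10.0000/a\nPlaceholder B,10.0000/b\n"
export_2 = "Title,DOI\nPlaceholder B,10.0000/B\nPlaceholder C,10.0000/c\n"

rows, unique = count_unique_papers([export_1, export_2])
print(f"{rows} rows, {unique} unique DOIs")
```

To use real files, read each one with `open(path, newline="", encoding="utf-8").read()` and pass the resulting strings in a list. Rows without a value in the key column count toward the total but not toward the unique count, so a large gap between the two numbers is a cue to check the export by hand.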

I decided I needed to spend more time reading the top papers and identifying keywords so that I could further refine my search. Elicit also has features for brainstorming research questions, which may also be useful.

Key Takeaways

- Elicit can expedite the literature search process by quickly identifying and summarizing relevant literature based on a specific research question, even if the papers don’t match the keywords. However, it is unclear how exhaustive the search results are because the number of search results is not reported. I recommend using Elicit in tandem with other literature databases.

- Elicit can search multiple databases, including scholarly journals and conference proceedings.

- Elicit streamlines the process of organizing papers with desired information into an easy-to-use table. Users can customize the table to include desired information such as intervention, outcomes measured, and study type. This can make it easier to manage and track papers during the writing process.

- Elicit can produce summaries of the top-cited papers on a specific research question.

While Elicit has many useful features, there are some limitations to be aware of:

- It is not immediately clear how many papers were returned by the search.

- Elicit cannot access full-text papers behind a paywall, which may limit the number of sources available for a given search.

- Elicit is not 100% accurate and can sometimes misinterpret information. Therefore, it’s important to double-check the information provided by Elicit for accuracy.

Despite its limitations, Elicit is a promising tool for literature searching that can expedite the search process and streamline the organization of search results. I recommend using Elicit in tandem with other literature databases to ensure a comprehensive search. Overall, my experience using Elicit was positive, and I look forward to exploring its potential further in future research.

Stay tuned for more on the use of Elicit and other AI tools for boosting your research and writing.